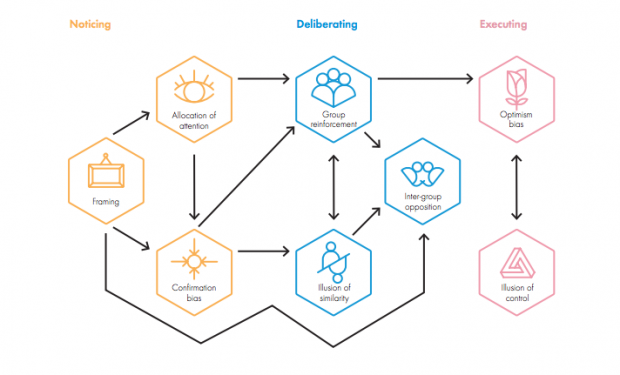

The Behavioural Insights Team’s latest report, Behavioural Government, argues that the decisions of policy-makers are affected by cognitive bias (p.7). BIT identify eight of the most common cognitive biases in government, which they categorise into three areas: noticing, deliberating, and executing. The report proposes several strategies to mitigate these biases. Having mapped those strategies against our tools and techniques, I believe they are already implicit in Policy Lab’s processes. In a series of three blogs, I dig into each of the three categories and share how policy-makers can use, and already are using, experimental approaches and practical tools to ‘bust biases’.

Cognitive bias occurs when an individual's perception of reality is at odds with objective reality. Since the 1970s, economists have been drawing on psychology to describe, though not explain, how people deviate from the homo economicus ideal of making decisions that are rational, utility maximising and based on full information. In reality, humans behave differently. The ‘biases’ have evolved with us, and are often very effective ways to think fast and deliver at pace. However, it is important to be aware of them because, in a policy-making context, biased decision making can unnecessarily reduce the wellbeing of citizens and lead to inefficient use of taxpayers’ money.

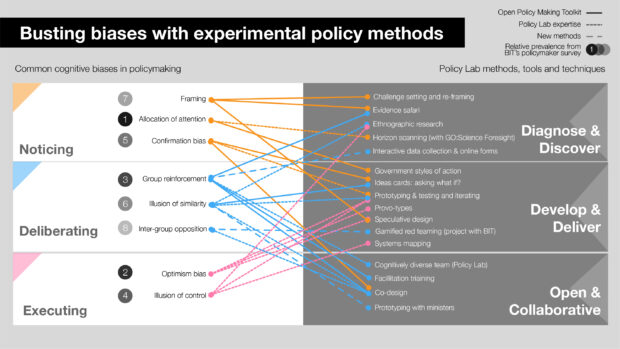

As a policy designer, I regularly refer to the Behavioural Insights Team’s EAST framework (Easy, Attractive, Social, Timely) alongside traditional design ethnography (observing how humans behave when interacting with policies, services or products) when making prototypes. But until reading BIT’s latest report, I hadn’t fully appreciated the connection with Policy Lab’s wider activities. To see the relationships, I decided to map the open policy making tools, project expertise and our newest methods against the eight most common policy-maker biases (ranked by experienced policy professionals surveyed by BIT, where 1 is the most prevalent and 8 the least).

This mapping shows that a bias can’t be bust by a single tool, but a single tool can help bust multiple biases. Depending on the type of problem and its stage of development, a tailored combination is needed.

In this first blog, I’m looking at noticing: here’s how you could overcome framing effects, the (mis)allocation of attention, and confirmation bias.

Framing and reframing

The framing of challenge questions has a powerful effect on how policy-makers approach problems and what information they consider to be relevant (we’re only human). In 2015, Ipsos MORI asked the public about changing the voting age. They found that the majority verdict flipped depending on whether people were asked if they supported ‘reducing the voting age from 18 to 16’ (37% for, 56% against) or ‘giving 16 and 17 year olds the right to vote’ (52% for, 41% against). The first wording does not include a reason for the adjustment, making it appear riskier, while the second is framed in terms of rights. Being aware of these framing effects is crucial in any policy-making.

To reduce bias, BIT recommend using reframing strategies to “help actors change the presentation or substance of their position in order to find common ground and break policy deadlock” (p.11).

Policy Lab’s ‘How can we’ cards offer one method of doing that. We normally start by asking everyone to write their own version of the challenge. Then, through a process of swapping, synthesis and iteration we seek to agree an initial shared challenge statement. But this is just a starting point and we will return again and again to the question as we collect new data and different perspectives. After initial research, the focus of a recent project on digital forensics shifted from increasing awareness amongst juries and judges to improving digital forensic best practice among investigating officers.

Another method is to bring in speculation at an early stage. We often ask co-design participants to imagine a utopian and a dystopian vision of a future policy problem. This activity can frame the same policy in terms of gains and losses. Loss aversion, the tendency to prefer avoiding losses over acquiring equivalent gains, means that participants are much more engaged when working to avoid dystopian visions of the future.

Allocation of attention

The availability bias describes how an individual or institutional memory gravitates towards ‘tried and tested solutions’ in times of stress. This behaviour occurs a lot in busy hospitals where doctors select treatments which they have suggested to patients with similar symptoms, without always fully understanding the problem. During our co-design workshops, we find teams often tend towards solutions in two spaces: legislation and education. Whilst these are potentially very effective levers, they are not the only ones - availability bias means they’re often the ones policy-makers jump to.

To help address this, we developed the government styles of intervention matrix and card set. The levers are by no means exhaustive but provide structure and stimulation for the ideas processes. They can be used to work out which options are in and out of scope and are also very helpful when engaging with stakeholders and the public on policy issues. We used them at a Futures Lab in February, where over 70 stakeholders generated ideas on what the Department for Transport could do to maintain the UK as a world leader in maritime autonomy.

Confirmation bias

According to the report, policy-makers, just like everyone else, have a tendency to “seek out, interpret, judge and remember information in a way which supports one’s pre-existing views and ideas” (p.29). When researching a policy we may be drawn to familiar information that supports our current perspective and ignore alternative realities. Publicly, this phenomenon has been symbolised by the growth of echo chambers on social media, where users self-select information sources that fill their news feeds.

The very nature of co-design, of course, is to avoid the risk of confirmation bias by effectively creating the opposite of an ‘echo chamber’. In our sessions we invite a broad spectrum of stakeholders. A good start is for us all to examine the evidence together: interrogating it, challenging it and adding knowledge. Our evidence safaris are a great way of doing this. Where possible, we publish some of them for review, and we ensure blank cards are available so that outside experts can provide new information and actively contribute to the process.

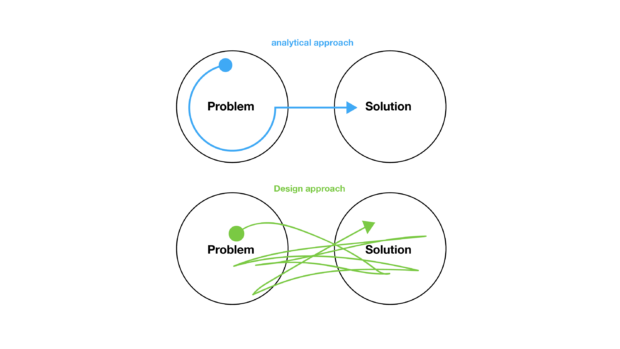

The design approach promotes iteration and testing, giving policy teams ‘break-points’ where both the problem and the solution can be re-evaluated. Fears of a u-turn can be reduced if ideas are treated as drafts, not commitments, and if feedback is received at an early stage.

Policy Lab’s approach and tools have been developed within the context of complex policy challenges. We hope that as we continue to test and refine them, they can play a part in busting some of those behavioural ghosts that haunt policy-makers.

Next time: deliberating